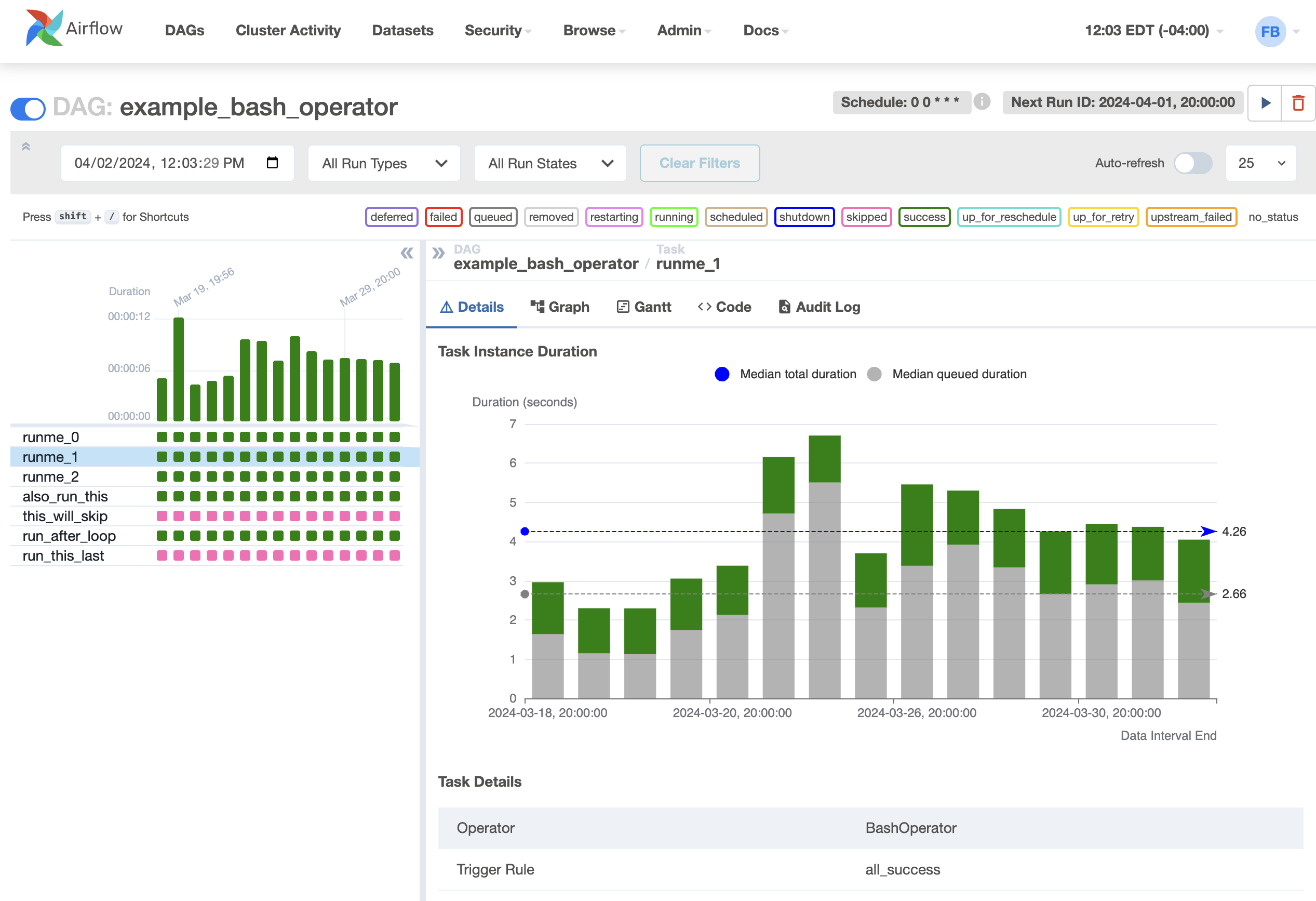

In Dagster, the computations defined in ops are composed in jobs, which define the sequence and dependency structure of the computations you want to execute. This directly corresponds to a DAG in Airflow. Here, we compose the ops `print_date`, `sleep`, and `templated` to match the dependency structure defined by the Airflow operators `t1`, `t2`, and `t3`.

To open the Airflow UI from the Airflow instance, click the 'Airflow' link under Airflow webserver.

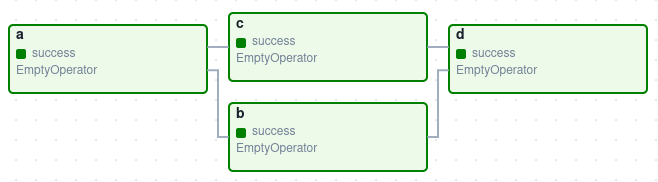

When building an Airflow DAG, I typically specify a simple schedule to run periodically, which I expect is the most common use: `dag = DAG('mydag', description='this is what it does', schedule_interval='0 12 * * *', start_date=datetime(2017, 10, 1), catchup=False)`. I then need to use the date as a parameter in my actual process. The tutorial DAG imports `datetime` and `timedelta` from the `datetime` module and sets `schedule_interval=timedelta(days=1)`.

Airflow stores DAGs in its DAGs directory. The scheduler scans this directory for Python files that contain the strings `dag` and `airflow` and parses them. `last_automated_data_interval` is an instance indicating the data interval of this DAG's previous non-manually-triggered run, or `None` if this is the first time the DAG is being scheduled.

To rewrite this DAG in Dagster, we'll break it down into three parts:

1. Define the computations: the ops (in Airflow, the operators).
2. Define the graph: the job (in Airflow, the DAG).
3. Define the schedule (in Airflow, the schedule; how simple!).

A Dagster job is made up of a graph of ops. This should feel familiar if you've used the Airflow Task API. With ops, the focus is on writing a graph with Python functions as nodes and the data dependencies between them as edges.

In Dagster, the minimum unit of computation is an op. This directly corresponds to an operator in Airflow. Here, we map the operators of our example Airflow DAG, `t1`, `t2`, and `t3`, to their respective Dagster ops:

- `print_date(context: OpExecutionContext) -> datetime` logs a date `ds` and returns it.
- `sleep()` is declared with `retry_policy=RetryPolicy(max_retries=3)` and an `ins` argument, and calls `sleep(5)`.
- `templated(context: OpExecutionContext, ds: datetime)` logs `ds` inside a `for _i in range(5)` loop.

Together, these ops form a graph of computations that can be viewed in the Dagster UI. We'll spin up the UI later in the tutorial.

Op-level retries: in the tutorial DAG, the `t2` operator allowed for three retries. To configure the same behavior in Dagster, you can use op-level retry policies.
